What is the data index and why is it needed?

Requirements for the data index

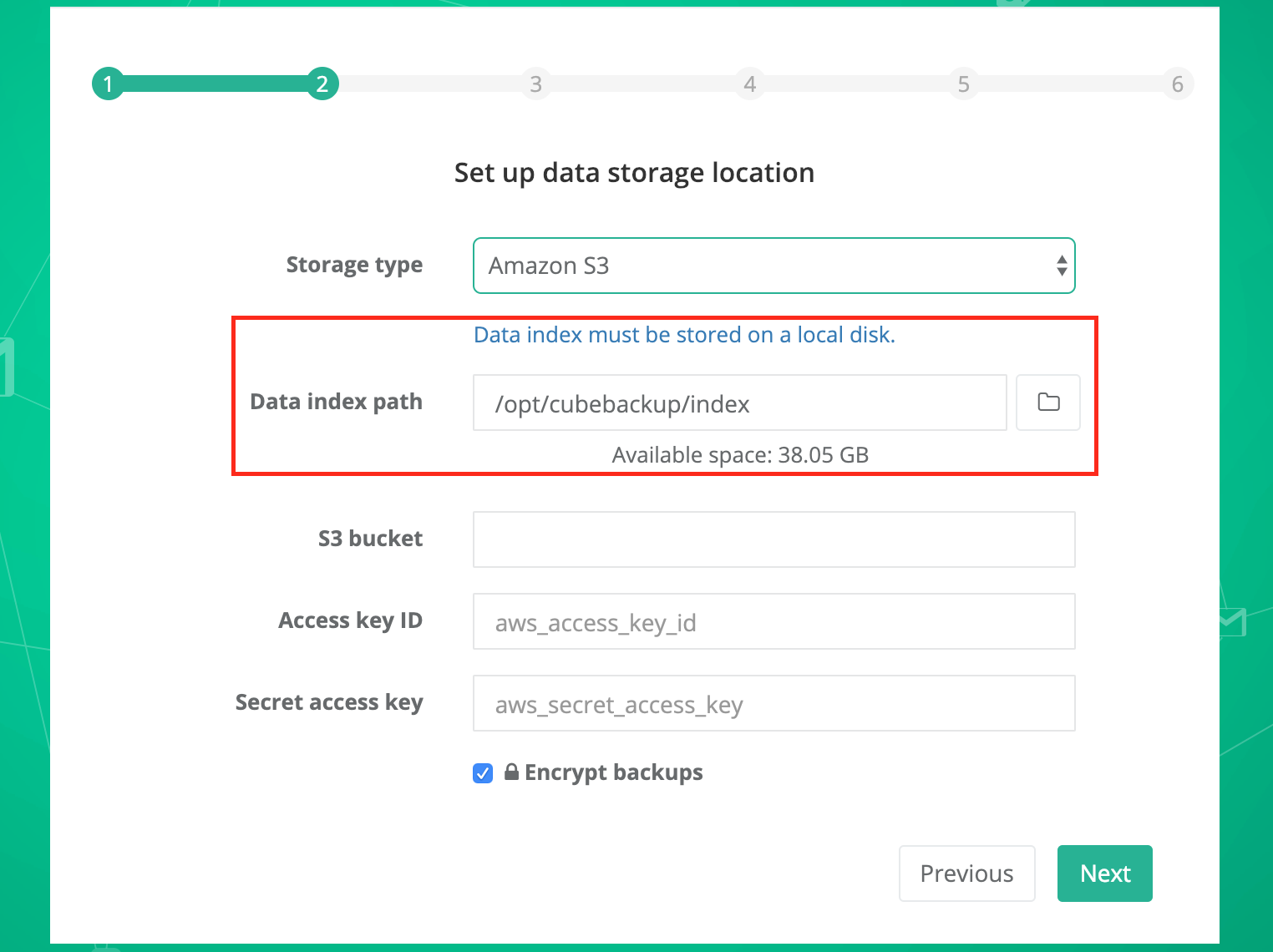

When you choose the storage location for your Google Workspace or Microsoft 365 backup data, the setup wizard will ask for a local directory for the data index. Please note:

- The data index path must be located on an actual local disk, not on mounted network storage.

- High-speed local storage, such as an SSD, is strongly recommended. Storing the data index on an SSD can greatly improve backup speed.
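As a rough pre-flight check for the first requirement, the sketch below looks up which filesystem a candidate directory lives on and flags common network filesystem types. It is not part of CubeBackup: it assumes a Linux host where mounts are listed in `/proc/mounts`, the function names are illustrative, and the list of network filesystem types is deliberately incomplete.

```python
import os
from typing import List, Optional, Tuple

# Filesystem types commonly used for network mounts on Linux.
# Assumption: this list is illustrative, not exhaustive.
NETWORK_FS_TYPES = {"nfs", "nfs4", "cifs", "smb3", "fuse.sshfs", "ceph"}


def parse_mounts(mounts_text: str) -> List[Tuple[str, str]]:
    """Return (mount_point, fs_type) pairs from /proc/mounts-style text."""
    mounts = []
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3:
            mounts.append((fields[1], fields[2]))
    return mounts


def fs_type_for(path: str, mounts: List[Tuple[str, str]]) -> Optional[str]:
    """Return the fs type of the longest mount point that contains path."""
    best = None
    for mount_point, fs_type in mounts:
        # Compare with trailing slashes so /mnt/nas does not match /mnt/nasty.
        prefix = mount_point.rstrip("/") + "/"
        if (path.rstrip("/") + "/").startswith(prefix):
            # ">=" lets a later mount on the same mount point win.
            if best is None or len(mount_point) >= len(best[0]):
                best = (mount_point, fs_type)
    return best[1] if best else None


def is_on_network_mount(path: str) -> bool:
    """Check a real path against the live mount table (Linux only)."""
    with open("/proc/mounts", encoding="utf-8") as f:
        mounts = parse_mounts(f.read())
    # Resolve symlinks first so a link into a network share is detected.
    return fs_type_for(os.path.realpath(path), mounts) in NETWORK_FS_TYPES
```

Calling `is_on_network_mount()` on the directory you plan to give the setup wizard returns `True` when that directory sits on one of the listed network filesystems. Mount points containing spaces are escaped in `/proc/mounts` and are not handled by this sketch.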

Why is the data index needed?

The data index contains part of the metadata for your backup, including SQLite database files, configuration files, and other information used in the backup process.

Data indexes are required for performance reasons. CubeBackup must perform substantial read and write operations on the SQLite database in order to track backup data, especially file and folder revisions. SQLite is designed to be a local database, and may have performance and integrity problems when accessed through remote storage, especially in a multi-threaded environment. That is why this metadata must be stored on a local drive.

The backup process relies heavily on reading from and writing to the data index. CubeBackup also backs up Google Workspace or Microsoft 365 accounts in parallel, and in most cases more than 10 users are backed up concurrently, so the data index can easily become a bottleneck. Based on our tests, storing the data index on an SSD can greatly improve backup speed.

Size of the data index

The data index for each user is about 200 MB on average, so be sure there is enough free space on the disk to hold current and future metadata. In general, we recommend that the partition have at least 100 GB of free space.
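The sizing guidance above can be turned into a quick estimate. The following is a minimal sketch, not a CubeBackup tool: the function names are illustrative, it treats 1 GB as 1000 MB, and taking the larger of the per-user estimate and the 100 GB recommendation is an assumption about how to combine the two figures.

```python
import shutil

AVG_INDEX_MB_PER_USER = 200  # average per-user figure quoted above
RECOMMENDED_FREE_GB = 100    # recommended minimum free space quoted above


def estimated_index_gb(user_count: int) -> float:
    """Estimate the total data index size in GB (1 GB = 1000 MB)."""
    return user_count * AVG_INDEX_MB_PER_USER / 1000


def required_free_gb(user_count: int) -> float:
    """Assumption: require the larger of the estimate and the recommendation."""
    return max(estimated_index_gb(user_count), RECOMMENDED_FREE_GB)


def has_enough_space(path: str, user_count: int) -> bool:
    """Compare free space on the partition holding path with the requirement."""
    free_gb = shutil.disk_usage(path).free / 1000**3
    return free_gb >= required_free_gb(user_count)
```

At the 200 MB average, 500 users already come to about 100 GB, so larger domains should plan for more than the recommended minimum. Because 200 MB is only an average, leave headroom for users with unusually large numbers of files and revisions.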

The data index acts as a cache

During the backup process, the data index is copied to the backup location within 24 hours.

- In the unlikely event that these SQLite files or other metadata are accidentally deleted, CubeBackup will automatically recreate them at the beginning of the next backup cycle. However, please still run the cbackup fullSync command before the next backup to ensure that any data index changes from the past 24 hours that have not yet been synced are not lost.

- If your CubeBackup server is shut down daily, the data index synchronization could be interrupted. To mitigate this, please set up a scheduled task that runs the cbackup syncDataIndex command every 24 hours, ensuring that the data index is properly copied to the backup location.