Why do backup speeds fluctuate so much and how can backup speeds be improved?

During a backup, CubeBackup displays the real-time backup speed in the web console. Some customers become concerned when this speed fluctuates dramatically or doesn't seem to make full use of the available bandwidth. Below are the main factors that can affect how quickly your Google Workspace data is backed up, along with several simple steps you can take to optimize CubeBackup performance.

Factors affecting the backup speed

Number of concurrent users.

CubeBackup for Google Workspace runs backup jobs in parallel and works fastest when it can back up 4 to 6 users simultaneously. When only one user or shared drive is left in the job queue, the backup speed may drop to some extent, since the other parallel backup slots have nothing left to do. This user or shared drive is often the last one left in the queue precisely because it has the most data to back up and therefore takes the longest.
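To illustrate why the speed drops when a single large user is left, here is a small simulation of a job queue processed by a fixed number of parallel workers. It is a simplified model written for this explanation, not CubeBackup's actual scheduler; the function name, job durations, and worker count are all hypothetical.

```python
import heapq

def simulate_backup(job_hours, workers):
    """Simulate a queue of per-user backup jobs run by a fixed number of workers.

    job_hours: hypothetical backup duration for each user/shared drive, in queue order.
    workers:   number of users backed up in parallel.
    Returns (total_hours, tail_hours), where tail_hours is the time between the
    second-to-last job finishing and the last job finishing.
    """
    free_at = [0.0] * workers  # min-heap: when each worker next becomes free
    heapq.heapify(free_at)
    end_times = []
    for duration in job_hours:
        start = heapq.heappop(free_at)  # earliest available worker takes the next job
        end = start + duration
        end_times.append(end)
        heapq.heappush(free_at, end)
    end_times.sort()
    total = end_times[-1]
    # After the second-to-last job finishes, only the final job keeps a worker busy.
    tail = total - (end_times[-2] if len(end_times) > 1 else 0.0)
    return total, tail

# Seven small users plus one large mailbox queued last, four workers.
total, tail = simulate_backup([2] * 7 + [20], workers=4)
print(f"total {total} h, single-job tail {tail} h")
```

With these made-up numbers, all four workers are busy for the first 4 hours, but for the remaining 18 of the 22 hours only the large mailbox is being backed up, which is when the displayed speed drops.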

Large number of small files.

Sometimes the backup speed may seem to fluctuate dramatically. This is because CubeBackup relies on Google API requests to retrieve backup data, which requires at least one round trip to Google Cloud for each item, regardless of file size. As a result, a large number of small files or messages takes much longer to back up than a few large files of the same total size.
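A back-of-the-envelope estimate shows the effect. The sketch below assumes a fixed cost of one API round trip per item plus raw transfer time, and ignores parallelism, retries, and API quotas; the round-trip time and bandwidth are hypothetical values, not measurements of Google's APIs.

```python
def estimate_seconds(item_count, total_bytes, round_trip_s, bytes_per_s):
    """Rough single-stream estimate: one API round trip per item plus raw transfer time.

    Ignores parallelism, retries, and API quotas; all inputs are hypothetical.
    """
    return item_count * round_trip_s + total_bytes / bytes_per_s

ONE_GB = 1_000_000_000
RTT = 0.2                # assumed seconds per API round trip
BANDWIDTH = 12_500_000   # assumed 12.5 MB/s, roughly 100 Mbit/s

few_large = estimate_seconds(10, ONE_GB, RTT, BANDWIDTH)
many_small = estimate_seconds(100_000, ONE_GB, RTT, BANDWIDTH)
print(f"10 large files: {few_large / 60:.1f} min")
print(f"100,000 small files: {many_small / 3600:.1f} h")
```

Both cases move the same 1 GB, yet under these assumptions the 10 large files take about 1.4 minutes while the 100,000 small files take about 5.6 hours, almost all of it spent on per-item round trips rather than on using bandwidth.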

Index read/write speed.

In most cases, the backup speed of CubeBackup depends more on the memory and disk type of the backup server than on network bandwidth. CubeBackup relies heavily on fast read/write operations against its data index, which can easily become a bottleneck for the whole system. The data index must be stored on a local drive, preferably an SSD on the backup server. If you've configured CubeBackup with the data index on mounted network storage, please move it to a local drive as soon as possible.

Throttle setting.

CubeBackup has flexible throttling settings that help limit its impact on your network during business hours. Please note that throttling is enabled by default for instances configured to back up to on-premises storage. To run backups at full speed, go to the SETTINGS page in the CubeBackup web console, find the Throttling section under the Systems tab, and uncheck both boxes.

Initial full backup.

During the initial backup, CubeBackup downloads all data in your Google Workspace. Depending on the total data size of all users in your company, the initial backup can last for days or, in some cases, even weeks. Fortunately, CubeBackup uses an incremental backup algorithm, so after the initial backup only new or modified data is added. Subsequent backups are therefore much smoother and faster.
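The general idea behind incremental backup can be sketched as comparing each item against an index recorded during the previous run. This is a minimal illustration assuming every item exposes a stable ID and a last-modified timestamp; the `Item` class and both helper functions are hypothetical, and CubeBackup's actual index format and change detection are not described here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Item:
    item_id: str   # hypothetical stable ID of a file or message
    modified: str  # hypothetical last-modified timestamp reported by the API

def select_for_backup(items, index):
    """Return items that are new or whose modified timestamp differs from the index."""
    return [it for it in items if index.get(it.item_id) != it.modified]

def record_backed_up(items, index):
    """Update the index only after items have been saved successfully."""
    for it in items:
        index[it.item_id] = it.modified

index = {}
initial = [Item("a", "t1"), Item("b", "t1"), Item("c", "t1")]
first_run = select_for_backup(initial, index)    # empty index: everything is new
record_backed_up(first_run, index)

later = [Item("a", "t1"), Item("b", "t2"), Item("c", "t1"), Item("d", "t1")]
second_run = select_for_backup(later, index)     # only "b" (modified) and "d" (new)
print([it.item_id for it in second_run])
```

The first run has to fetch everything, which is why the initial backup is slow; later runs touch only the handful of items that changed.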

Stable network connection.

An unstable Internet connection can also cause many unexpected errors, which slow down the backup or even force it to be canceled until the next scheduled attempt. CubeBackup does not discard backup progress when a backup is canceled, but these disruptions can still greatly reduce backup performance.
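The pattern of keeping completed work when a run is abandoned can be sketched as retry-with-checkpoint: each item is retried a few times with backoff, and if it still fails the run stops, but the set of finished items survives so the next scheduled run resumes from there. This is a generic illustration, not CubeBackup's implementation; `TransientNetworkError`, `run_with_checkpoint`, and the flaky backup function are hypothetical.

```python
import time

class TransientNetworkError(Exception):
    """Hypothetical stand-in for a dropped connection or timeout."""

def run_with_checkpoint(items, backup_one, done, max_retries=3, delay_s=0.0):
    """Back up items in order, skipping those already recorded in `done`.

    Each item is retried up to `max_retries` times on a transient error. If it
    still fails, the run is abandoned, but `done` keeps every completed item so
    the next scheduled run does not redo them. Returns True if all items finished.
    """
    for item in items:
        if item in done:
            continue
        for attempt in range(max_retries):
            try:
                backup_one(item)
                done.add(item)
                break
            except TransientNetworkError:
                time.sleep(delay_s * (2 ** attempt))  # exponential backoff
        else:
            return False  # give up until the next scheduled run
    return True

performed = []
failures_left = {"c": 5}  # simulate "c" failing five times in a row

def flaky_backup(item):
    if failures_left.get(item, 0) > 0:
        failures_left[item] -= 1
        raise TransientNetworkError(item)
    performed.append(item)

done = set()
first = run_with_checkpoint(["a", "b", "c", "d"], flaky_backup, done)   # stops at "c"
second = run_with_checkpoint(["a", "b", "c", "d"], flaky_backup, done)  # resumes at "c"
print(first, second, performed)
```

Every item is backed up exactly once across the two runs, but the interrupted run still costs time in failed attempts and backoff, which is why an unstable connection hurts overall performance.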

Suggestions for speeding up the backup process

Store the data index on a local SSD.

A local SSD greatly speeds up read/write operations on the data index and improves backup performance. If you'd like to move the data index to faster storage, please follow the instructions in this doc.

Back up more users simultaneously.

If the backup server has at least 8 GB of memory, consider increasing the number of user accounts backed up in parallel.

Split the backup task between multiple backup servers.

For large organizations with thousands of users, we recommend running multiple CubeBackup instances simultaneously.

For example, you could deploy two CubeBackup instances, where all users in Department A (OU A) are backed up by instance 1 and all users in Department B (OU B) are backed up by instance 2.
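Planning such a split amounts to mapping each organizational unit to exactly one instance and confirming that no two instances share a storage location. The sketch below only illustrates that partitioning logic; the actual user selection is configured in each CubeBackup instance, and the OU paths, instance names, bucket names, and helper functions here are all hypothetical.

```python
def find_instance(ou_path, ou_to_instance):
    """Return the instance mapped to ou_path or its nearest mapped parent OU, else None."""
    path = ou_path
    while True:
        if path in ou_to_instance:
            return ou_to_instance[path]
        if path in ("/", ""):
            return None
        path = path.rsplit("/", 1)[0] or "/"  # "/A/B" -> "/A" -> "/"

def assign_instances(users, ou_to_instance):
    """Split (email, ou_path) pairs into per-instance lists plus an unassigned list."""
    plan, unassigned = {}, []
    for email, ou_path in users:
        instance = find_instance(ou_path, ou_to_instance)
        if instance is None:
            unassigned.append(email)  # surface gaps instead of silently skipping users
        else:
            plan.setdefault(instance, []).append(email)
    return plan, unassigned

def check_distinct_storage(instance_storage):
    """Fail if two instances share a storage location (which risks data conflicts)."""
    locations = list(instance_storage.values())
    if len(set(locations)) != len(locations):
        raise ValueError("each CubeBackup instance needs its own storage location")

ou_map = {"/Department A": "instance-1", "/Department B": "instance-2"}
users = [
    ("alice@example.com", "/Department A"),
    ("bob@example.com", "/Department B/Team 1"),
    ("carol@example.com", "/Contractors"),
]
plan, unassigned = assign_instances(users, ou_map)
check_distinct_storage({"instance-1": "bucket-a", "instance-2": "bucket-b"})
print(plan)        # {'instance-1': ['alice@example.com'], 'instance-2': ['bob@example.com']}
print(unassigned)  # ['carol@example.com']
```

Reporting unassigned users explicitly makes it easy to spot accounts that would otherwise not be backed up by any instance.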

Please note that the backup data for each instance must be stored in different storage locations (or separate buckets in the case of cloud storage) to avoid data conflicts.

Wait patiently for the backup to complete.

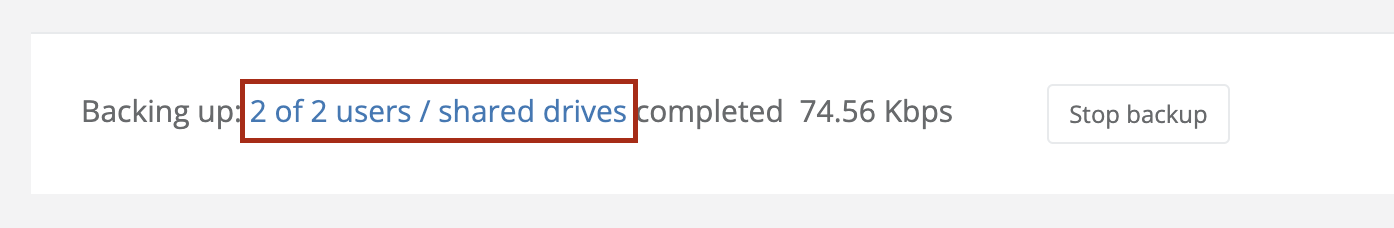

You can click on the number of completed users in the CubeBackup for Google Workspace web console to display the items currently being backed up.

Suggestion for speeding up the restore process

CubeBackup allows you to easily restore backup data with just one click and will perform most restorations smoothly and quickly. By default, CubeBackup for Google Workspace processes up to 3 restoration tasks concurrently.

However, if you are performing a large number of restorations at once, you may consider increasing the number of concurrent tasks to speed up the process, provided that the backup server has a relatively fast upload speed and at least 8 GB of memory. Detailed instructions can be found in the article Set the number of concurrent restoration tasks.
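A concurrency limit like this is commonly implemented with a semaphore that caps how many tasks run at once. The sketch below shows that general technique with `asyncio.Semaphore`; it is not CubeBackup's restore scheduler, and `restore_all` and `fake_restore` are hypothetical stand-ins for real restoration jobs.

```python
import asyncio

async def restore_all(tasks, max_concurrent=3):
    """Run restoration tasks with at most `max_concurrent` in flight.

    tasks: list of zero-argument async callables (hypothetical restore jobs).
    Returns the peak number of tasks observed running at once.
    """
    semaphore = asyncio.Semaphore(max_concurrent)
    running = 0
    peak = 0

    async def guarded(task):
        nonlocal running, peak
        async with semaphore:  # waits here once the limit is reached
            running += 1
            peak = max(peak, running)
            try:
                await task()
            finally:
                running -= 1

    await asyncio.gather(*(guarded(t) for t in tasks))
    return peak

async def fake_restore():
    await asyncio.sleep(0.01)  # stand-in for uploading restored data

peak_default = asyncio.run(restore_all([fake_restore] * 10))
peak_raised = asyncio.run(restore_all([fake_restore] * 10, max_concurrent=6))
print(peak_default, peak_raised)
```

Raising the limit only shortens the total time if each extra task has upload bandwidth and memory to use; otherwise the tasks simply compete for the same resources.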

Contact support

If you are still concerned about backup performance, please do not hesitate to contact us at [email protected].